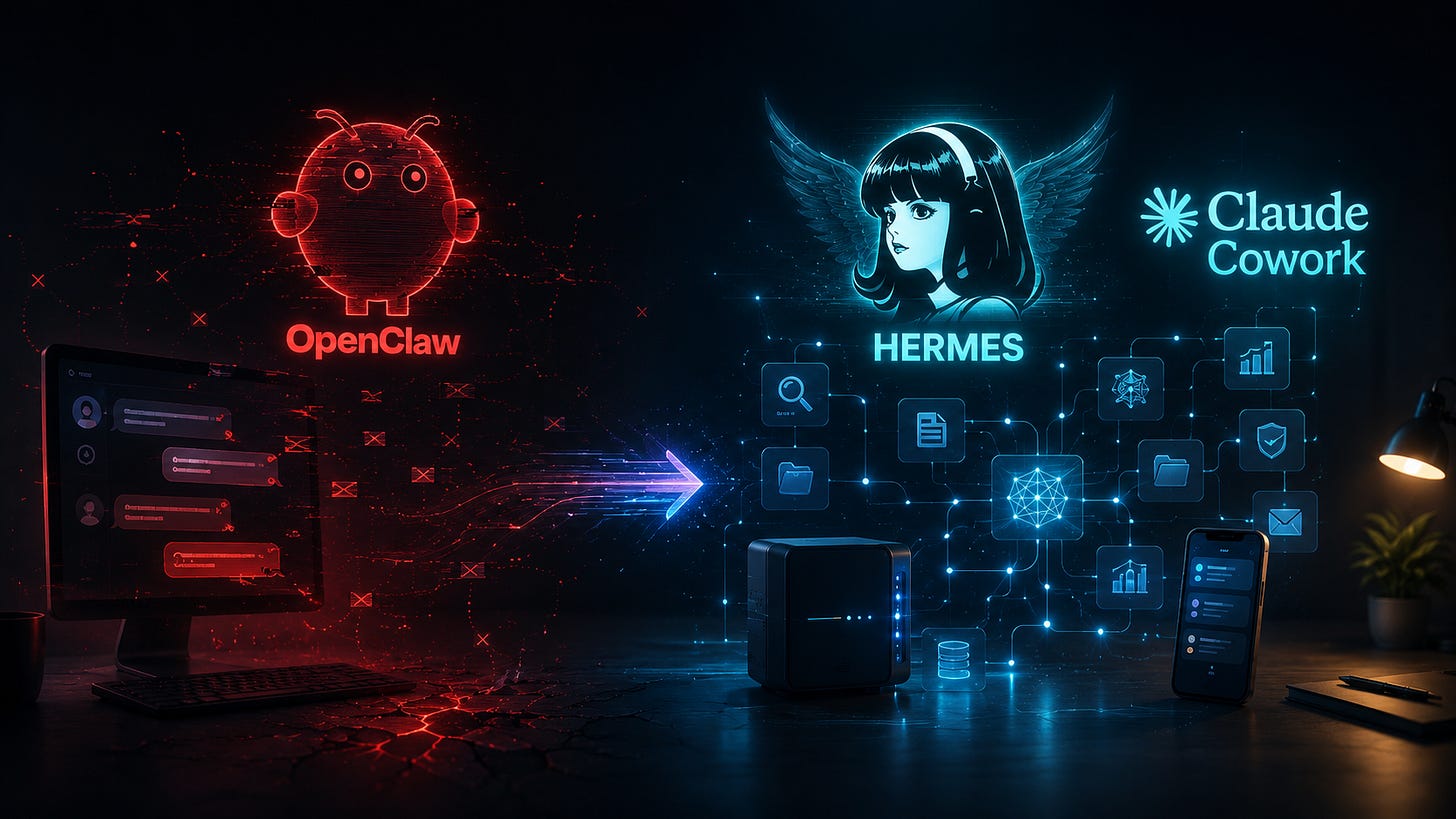

I Killed My OpenClaw Agent. Here's What I Run Instead.

I launched an OpenClaw agent earlier this year. It didn’t work.

And I don’t mean “needed some configuration tweaks.” I mean it broke. Constantly. Every time OpenClaw pushed an update (which was basically every day), something else fell over. A skill I’d built would stop firing. A connection to my messaging app would drop. A workflow that worked on Tuesday would get stuck on Thursday, repeating the same step over and over instead of finishing.

It took me a few weeks to admit that the problem wasn’t me. The thing was just unstable.

If you’re not in the agent space, here’s the quick deets on OpenClaw. It launched as Clawdbot and racked up one hundred thousand GitHub stars in its first week. It triggered a global Mac mini shortage (my husband flew from Bali to Jakarta to track down two of the last ones in the country), got rebranded twice (first to Moltbot after Anthropic objected to the original name, then to OpenClaw), and became the project everyone was talking about in late January. It promised to turn your computer into a self-running agent that could read your messages, run skills, and operate semi-autonomously across platforms.

What it actually delivered was a daily reliability problem.

The GitHub issue tracker tells the story. By May 12 the project had crossed eighty-one thousand filed issues, with new “regression” bugs (the polite label for “this worked yesterday and now it doesn’t”) landing daily. The official docs have an entire troubleshooting page dedicated to specific failure modes. A third-party blog post titled “Why Your OpenClaw Agent Is Not Working” exists specifically because so many people hit the same wall I did. And Cisco published a long technical breakdown in January concluding that OpenClaw “fails decisively” on security, with hundreds of administrative interfaces sitting wide open on the public internet because the default configuration trusts any connection that looks local.

The agent doesn’t self-improve. It can’t learn from its own mistakes. Every patch that ships can quietly break the workflow you built the week before. And there is no internal mechanism for the system to notice that and adjust.

I shut mine down.

What Actually Works

Then I tried Hermes.

Hermes was built by Nous Research and released earlier this year. Same category (autonomous agent that lives on a server and runs across messaging platforms), completely different posture. Where OpenClaw is a flashy public project trying to keep pace with its own hype cycle, Hermes is built around one specific architectural choice that changes everything.

It has a built-in learning loop.

When Hermes solves a hard problem, it writes the solution as a reusable skill. The next time it encounters that situation, it doesn’t start from zero. It pulls the skill. It remembers preferences and projects across sessions. It can search its own past conversations. It builds a deepening model of how I work over time, so I stop having to re-explain context every few days.

Most of what makes AI feel like a wasted investment is having to re-explain yourself constantly. Every new chat starts blank. Every workflow has to be rebuilt by hand. Hermes is the first agent I’ve used where the inverse is true. I’ve only been running it for about three weeks, and it’s already noticeably more useful than it was on day one.

I run mine on one of the Mac minis my husband flew to Jakarta for. It reaches me through Telegram. It handles research tasks, drafting work, scheduling reminders, and a handful of routine ops jobs that used to live on my own plate. It also took everything my OpenClaw agent was doing well, ported it over, and improved its performance by more than fifty percent. Same hardware. Same use case. Different architecture, and you can feel the difference in the output.

The third agent in my setup is Claude Cowork. I use it daily for agentic tasks inside my actual workflow: file management, document review, multi-step project execution. The agent doesn’t just answer a question and stop. It plans, executes, checks itself, and reports back. If you’ve been using Claude on the desktop in 2026, you’ve probably already crossed into agent territory without noticing the line.

So that’s where I’ve landed. One agent I shut down because it kept breaking, one I’m running daily that keeps getting better, and one I’m already inside of without thinking about it. What separates the ones that work from the one that didn’t comes down to whether the system can learn from what it’s already done. OpenClaw can’t. Hermes can. Claude Cowork can.

Here’s What You Need to Know

This is the biggest story in AI right now, and almost no one is putting it in plain language for non-technical entrepreneurs.

Anthropic’s Model Context Protocol, the underlying plumbing that lets agents connect to tools and data, crossed ninety-seven million installs in March. Every major AI provider now ships MCP-compatible tooling. Apple just announced it’s opening iOS to let users choose third-party AI providers, including Anthropic, to power native features. Anthropic also introduced a new technique called “dreaming” that lets autonomous agents review their own past behavior between sessions and improve. The Stanford AI Index, released a few weeks ago, found that frontier companies are now using three-and-a-half times more AI intelligence per employee than typical firms. The gap is in agentic workflows specifically.

Translation: the bar moved.

Six months ago, “using AI” meant typing a question into ChatGPT and getting an answer. The leverage has already moved past that. It now lives in systems that run on their own, learn over time, talk to your tools, and execute multi-step work without you sitting there prompting them turn by turn.

Most of your competitors are still typing one-off prompts into a chat window and calling that their AI strategy. They have no idea the ground has already shifted underneath them.

What This Means If You Run a Business

You don’t need to launch your own OpenClaw clone tomorrow. You don’t need to learn to code. You don’t even need to install Hermes yourself (though if you’re a builder, you should absolutely look at it).

What you do need is to understand that the chatbot era you spent the last two years getting comfortable with is closing. Agentic systems are the new ground floor. The work now is learning what an agent actually is, what it does, how it differs from prompting, and how to evaluate one before you commit to it. The gap between the businesses that understand this shift and the ones that don’t is widening fast, and it’s not going to wait for anyone to feel ready.